Computers

Microsoft is rolling out an icon redesign for Windows 10

And a tonne of other changes!

The Windows operating system, as we know it, is more than two decades old. A lot has changed, but a lot still hasn’t. In what amounts to a UI refresh, Microsoft is finally updating the icons in File Explorer after a very long hiatus.

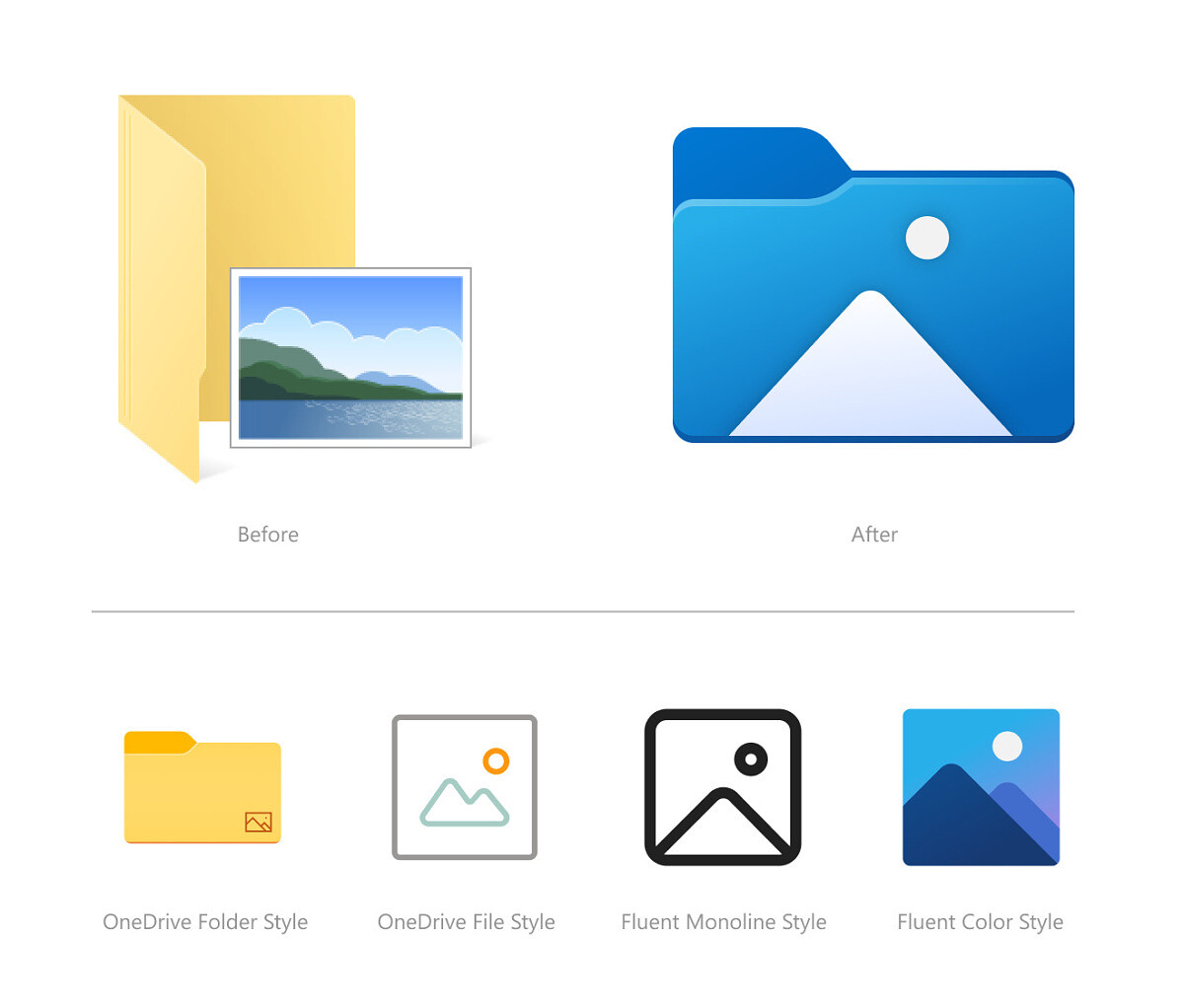

The first and most prominent change is the addition of new icons in File Explorer. Microsoft has slowly made changes to the icons in Windows 10 over the last year, recently changing the icon for Notepad.

“Several changes, such as the orientation of the folder icons and the default file type icons, have been made for greater consistency across Microsoft products that show files,” says Amanda Langowski, Microsoft’s Windows Insider chief. “Notably, the top-level user folders such as Desktop, Documents, Downloads, and Pictures have a new design that should make it a little easier to tell them apart at a glance.”

Although this might not be a major change for the operating system as a whole, it is one of the ways Microsoft is modernizing the look of Windows. Microsoft’s changelog for Windows 10 Insider Preview Build 21343 is long and lists many other changes and improvements.

Most importantly, this tiny change shows how much software delivery has changed over the years. Windows has gone from being installed off a CD to receiving continuous over-the-air updates, and Microsoft has positioned Windows 10 as the last version of Windows, delivered as an ongoing service.

The operating system continues to receive a steady stream of updates, ranging from major changes, like replacing Internet Explorer with Edge, to smaller tweaks that often go unnoticed.

Here are some of the changes and improvements included in Windows 10 Insider Preview Build 21343:

- We’re changing the name of the Windows Administrative Tools folder in Start to Windows Tools. We are working to better organize all the admin and system tools in Windows 10.

- [News and interests] Update on the rollout: following our last update on languages and markets, this week we’re also introducing the experience to China! We continue to roll out news and interests to Windows Insiders, so it isn’t available to everyone in the Dev Channel just yet.

- We are now rolling out the new IME candidate window design to all Windows Insiders in the Dev Channel using Simplified Chinese IMEs.

- We’re updating the “Get Help” link in the touch keyboard to now say “Learn more”.

- We’re updating File Explorer when renaming files to now support using CTRL + Left / Right arrow to move your cursor between words in the file name, as well as CTRL + Delete and CTRL + Backspace to delete words at a time, like other places in Windows.

- We’ve made some updates to the network-related surfaces in Windows so that the displayed symbols use the updated system icons we recently added in the Dev Channel.

- Based on feedback, if the Shared Experiences page identifies an issue with your account connection, it will now send the notifications directly into the Action Center rather than repeated notification toasts that need to be dismissed.

MINIX has launched the T4000 and T5000 Generative AI Mini Workstations.

These powerful and space-saving solutions are built for professional generative AI, local large language model (LLM) inference, content creation, on-premise enterprise deployment, and lightweight model training.

The desktops are powered by the NVIDIA Jetson AGX Thor series modules with flagship Blackwell architecture. As such, they deliver exceptional on-device AI horsepower in a small desktop form factor.

The build features a durable metal and plastic chassis, plus a twin turbo intercooler for sustained performance.

The new offerings are engineered for professionals, developers, creators, and IT teams, redefining edge and on-premise AI without bulky server hardware.

At the core of the T4000 and T5000 is NVIDIA’s cutting-edge compute platform:

- T4000: Up to 1200 Sparse FP4 TFLOPS AI performance

- T5000: Up to 2070 Sparse FP4 TFLOPS AI performance

- 1536- or 2560-core Blackwell GPU with fifth-generation Tensor Cores

- Multi-Instance GPU (MIG) for parallel task efficiency

- NVIDIA PVA 3.0 dedicated vision processing engine

The workstations natively support smooth local inference for 7B-70B parameter LLMs. This makes private, low-latency AI accessible for businesses and creators.

In addition, the workstations offer high-core-count Arm processing, pairing a 12-core or 14-core Arm Neoverse-V3AE 64-bit CPU with up to 128GB of fast DDR5 memory.

Designed for professional workflows, the mini workstations also include enterprise-grade networking and flexible expansion:

- Dual 10GbE Ethernet

- Wi-Fi 6E

- Bluetooth 5.3

- 2x HDMI 2.1 TMDS (4K@60Hz)

- 4x USB 3.2 Gen 1 Type-A

- 1x USB 3.2 Gen 2 Type-C

- 24V DC input, up to 200W max power

Ideal use cases for the MINIX T4000 and T5000 include local LLM inference, generative AI creation, on-device AI computing, and lightweight model training.


Lenovo accelerates production-ready enterprise AI with NVIDIA

From AI inferencing to gigawatt-scale AI factories

Lenovo has unveiled new Lenovo Hybrid AI Advantage with NVIDIA solutions designed to accelerate AI adoption, reduce time-to-first-token (TTFT), and deliver measurable business results across personal, enterprise, and cloud environments.

Building on the inferencing acceleration introduced at Lenovo Tech World, this next phase of Hybrid AI execution extends the solutions from devices to data centers to gigawatt-scale AI cloud deployments.

This enables real-time decision-making, operational efficiency, and intelligent automation across industries at global scale. The solutions boost productivity, agility, and innovation by enabling faster AI deployment.

The development comes as AI shifts from training models to powering real-time decisions. As organizations look for infrastructure to support that shift, Lenovo is positioning itself to meet the demand for validated hybrid AI platforms built for production-scale inferencing.

In fact, Lenovo says its Hybrid AI Advantage with NVIDIA solutions are now delivering ROI in less than six months. The new inferencing-optimized ThinkSystem and ThinkEdge servers are being used for real-time inferencing across retail, manufacturing, healthcare, sports, and smart city scenarios.

The expanded portfolio includes:

- Two Lenovo Hybrid AI platforms featuring NVIDIA RTX PRO 6000 Blackwell Server Edition and Blackwell Ultra

- A Hybrid AI inferencing starter platform with RTX PRO 4500 Blackwell Server Edition

- Lenovo ThinkAgile HX650a with Nutanix Enterprise AI and Nutanix Kubernetes Platform

- Lenovo Hybrid AI platforms with Cloudian

Bringing inferencing directly to professionals

Lenovo and NVIDIA are bringing AI from development environments to real-world production at a global scale. This is thanks to new Lenovo AI inferencing platforms with NVIDIA Dynamo and NVIDIA NIM.

Meanwhile, Lenovo AI Cloud gigafactory platforms are powered by NVIDIA Vera Rubin NVL72. Industry-specific agentic AI solutions are also built with NVIDIA Blueprints and software.

For professionals, there are next-generation NVIDIA RTX PRO Blackwell-powered mobile and desktop workstations. These will roll out across the ThinkPad P14s Gen 7, ThinkPad P16s Gen 5, and ThinkPad P1 Gen 1 lineups.

ThinkStation P5 Gen 2 desktops, meanwhile, will get up to two RTX PRO 6000 Blackwell Max-Q GPUs. They will also have support for NVIDIA OpenShell.

For gigawatt-scale scenarios, the next-gen Vera Rubin platform accelerates deployment for hyperscale and sovereign AI cloud providers.

These fully liquid-cooled, rack-scale AI systems are engineered for faster deployment and dramatically improved token economics. They can achieve up to 10x higher throughput and up to 10x lower cost per token.


CIPTA debuts AI GPU server, edge workstation at CloudFest 2026

Malaysia-made AI infrastructure

CIPTA Industrial Sdn Bhd is stepping onto the global stage with its European debut at CloudFest 2026, where it is introducing high-density AI infrastructure and edge-ready systems built for modern enterprise workloads.

Held at Europa-Park in Rust, Germany from March 23 to 26, the event marks the company’s first major international showcase under its own brand. Backed by InWin Development Inc., CIPTA positions itself as a new-generation EMS provider focused on AI, cloud, and enterprise systems.

At Booth R41, the company is highlighting two key platforms: the RG658 PRO GPU server developed with Phison, and the cubePRO edge workstation created in collaboration with Accordance.

Built for scalable AI workloads

Leading the showcase is the RG658 PRO, a high-density GPU server designed to handle large-scale AI training and inference without pushing costs out of reach for enterprises.

The system supports up to eight high-performance GPUs and integrates Phison’s Pascari aiDAPTIV alongside its Pascari enterprise SSD lineup. This combination aims to improve data throughput, reduce latency, and streamline AI pipelines.

Thermal performance is a key focus. The RG658 PRO uses a dual-chamber design to separate heat zones, paired with up to 14 high-speed PWM fans for sustained cooling under heavy workloads. Power delivery is handled by a 3+1 redundant configuration of 80PLUS Titanium PSUs, scaling up to 9600W.

The result is a platform built to scale AI deployments on-site while maintaining efficiency and reliability.

Edge computing without downtime

Alongside its GPU server, CIPTA is introducing the cubePRO, a compact edge workstation designed for environments where uptime and data integrity are critical.

The system supports up to four PCIe slots for GPU configurations, making it suitable for AI workloads at the edge. It also features high-capacity multi-SSD setups and optimized airflow for continuous 24/7 operation.

Through its partnership with Accordance, the cubePRO integrates the Disk Array ARAID M500 solution, enabling high-availability storage and data protection. This ensures uninterrupted performance for use cases such as industrial systems, remote nodes, and enterprise branch deployments.

The focus here is clear: bring AI processing closer to where data is generated, without sacrificing reliability.

Strengthening Malaysia’s role in AI infrastructure

CIPTA’s debut also reflects a broader shift in global supply chains. Operating from Malaysia, the company offers end-to-end services—from concept to production—along with flexible manufacturing cycles and cost-efficient operations tailored for Southeast Asia and international markets.

With access to InWin’s server chassis ecosystem and infrastructure solutions, CIPTA combines global platform capabilities with localized integration. The goal is to help enterprises deploy AI and cloud infrastructure faster while diversifying their supply chain footprint.

As demand for AI systems continues to grow, CIPTA is positioning Malaysia as a key hub for scalable, production-ready infrastructure.

Visitors can find CIPTA at Booth R41 during CloudFest 2026 in Europa-Park, Rust, Germany.
