
Why is USB Type-C so important?

Over the past two decades, devices using the Universal Serial Bus (USB) standard have become part of our daily lives. From transferring data to charging our devices, the standard has continued to evolve, and USB Type-C is its newest connector. Here’s why you should care about it.

First, here’s a little history

Chances are you’ve encountered devices that have a USB port, such as a smartphone or computer. But what exactly is the USB standard? Simply put, it defines a common communication protocol, along with standardized ports and connectors, that lets devices talk to each other. It’s to devices roughly what a shared language is to humans.

When USB was first introduced to the market, the connectors used were known as USB Type-A. You’re likely familiar with this connector: it’s rectangular and can only be plugged in one way. To make a connection, a USB Type-A connector plugs into a USB Type-A port, much like an appliance plugs into a wall outlet. This port usually resides on host devices such as computers and media players, while Type-A connectors are usually attached to peripherals such as keyboards or flash drives.

There are also USB Type-B connectors, which usually sit on the other end of a USB cable, the end that plugs into a device like a smartphone. Because external devices come in different sizes, there are a few different Type-B designs: printers and scanners use the Standard-B port, older digital cameras and phones use the Mini-B port, and recent smartphones and tablets use the Micro-B port.

Samples of the different USB Type-B connectors. From left to right: Standard-B, Mini-B, and Micro-B (Image credit: Amazon)

Specifications improved through the years

Aside from connectors and ports, another integral part of the USB standard is its specifications, which document the capabilities of each USB version.

The first-ever version of USB, USB 1.0, was released in 1996 and specified transfer rates of 1.5Mbps (megabits per second) and 12Mbps, but it saw little adoption in consumer products. Instead, the revised USB 1.1, released in 1998, became the first version to be widely adopted, with a maximum transfer rate of 12Mbps.

The next version, USB 2.0, was released in 2000 with a significantly higher transfer rate of up to 480Mbps. Both USB 1.x and USB 2.0 ports can also act as power sources at 5V, supplying 100mA by default and up to 500mA to devices that request it.

Next up was USB 3.0, introduced in 2008, which defines a transfer rate of up to 5Gbps (gigabits per second), a roughly tenfold increase over USB 2.0. This was achieved largely by adding dedicated wires and pins for the faster signals; a USB 3.0 Type-A connector has nine pins instead of four. To make them easier to spot, USB 3.0 connectors and ports are usually colored blue, compared to the black or gray of USB 2.0 and below. USB 3.0 also improves power delivery, with a rating of 5V, 900mA.

In 2013, USB was updated to version 3.1, which doubles USB 3.0’s bandwidth to up to 10Gbps. The bigger change came in power: the companion USB Power Delivery (USB PD) specification allows up to 20V at 5A (100W), which is enough to power even notebooks. Power can also flow in either direction this time around, meaning either the host or the peripheral can provide power, whereas before only the host could.
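Power in watts is simply voltage multiplied by current, which is why the jump to 20V at 5A matters so much. Here’s a quick sketch of that arithmetic using the ratings quoted in this article; the names `RATINGS` and `watts` are just illustrative, and real devices and cables may negotiate less than the maximum.

```python
# Maximum power for each rating quoted in this article: P (watts) = V (volts) x I (amps).
# These are the article's headline figures, not what every device actually draws.
RATINGS = {
    "USB 1.x/2.0 (5V, 500mA)": (5.0, 0.5),
    "USB 3.0 (5V, 900mA)": (5.0, 0.9),
    "USB 3.1 era (20V, 5A)": (20.0, 5.0),
}


def watts(volts: float, amps: float) -> float:
    """Return electrical power in watts."""
    return volts * amps


for name, (v, a) in RATINGS.items():
    print(f"{name}: {watts(v, a):.1f} W")
```

The roughly 100W ceiling, assuming the charger, cable, and device all support it, is what makes charging a notebook over a single cable possible; the earlier 2.5W and 4.5W ratings only suit small peripherals.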

Here’s a table of the different USB versions:

| Version | Bandwidth | Power Delivery | Connector Type |
|---|---|---|---|
| USB 1.0/1.1 | 1.5Mbps/12Mbps | 5V, 500mA | Type-A to Type-A, Type-A to Type-B |
| USB 2.0 | 480Mbps | 5V, 500mA | Type-A to Type-A, Type-A to Type-B |
| USB 3.0 | 5Gbps | 5V, 900mA | Type-A to Type-A, Type-A to Type-B |
| USB 3.1 | 10Gbps | 5V, up to 2A; 12V, up to 5A; 20V, up to 5A | Type-C to Type-C, Type-A to Type-C |
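To put the bandwidth column in perspective, here’s a rough calculation of how long a 4GB file would take at each version’s maximum rate. This is a best-case sketch under stated assumptions: 1GB is treated as 8,000 megabits and all protocol and encoding overhead is ignored, so real-world transfers will be noticeably slower. The function name `transfer_seconds` is hypothetical.

```python
# Best-case transfer time for a file at each USB version's maximum rate.
# Assumptions: decimal units (1 GB = 8,000 megabits) and zero protocol overhead.
RATES_MBPS = {
    "USB 1.1": 12,
    "USB 2.0": 480,
    "USB 3.0": 5_000,
    "USB 3.1": 10_000,
}


def transfer_seconds(size_gb: float, rate_mbps: float) -> float:
    """Return the idealized transfer time in seconds for size_gb gigabytes."""
    megabits = size_gb * 8_000
    return megabits / rate_mbps


for version, rate in RATES_MBPS.items():
    print(f"{version}: {transfer_seconds(4, rate):,.1f} s")
```

Even in this idealized model, going from USB 2.0 to USB 3.0 cuts the wait from over a minute to a few seconds, and USB 3.1 halves it again.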

Now that we’ve established how USB has evolved since its initial release, two things stand out. First, each new version of USB mostly raised transfer rates and power delivery. Second, the ports and connectors hadn’t changed much, aside from the extra pins added with USB 3.0. So, what’s next for USB?

USB Type-C isn’t your average connector

After USB 3.1 was announced, the USB Implementers Forum (USB-IF), which oversees USB standards, followed it up with a new connector: USB Type-C. The new design promised to fix the age-old problem of trying to plug a connector into a port the wrong way up. There’s no “wrong” way to plug in a Type-C connector, since it’s reversible. It also addresses how bulky older connectors limit how thin devices can be, a problem the Type-C connector’s slim profile avoids.
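How does a port cope with a plug inserted either way up? A Type-C port has two configuration channel pins, CC1 and CC2, and a standard cable carries only one CC wire. A power-providing port checks which of the two pins sees the attached device’s pull-down resistor (called Rd) and uses that to work out the plug’s orientation before routing the high-speed signals. The sketch below models only that decision. The `Termination` enum and `detect_orientation` function are hypothetical names, and real port controllers compare pin voltages against thresholds defined in the Type-C specification, which this simplified model does not reproduce.

```python
from enum import Enum
from typing import Optional


class Termination(Enum):
    """What a power-providing port sees on one CC pin (simplified model)."""
    OPEN = "open"  # nothing attached to this pin
    RD = "Rd"      # device pull-down: an ordinary device is attached on this pin
    RA = "Ra"      # pull-down from a powered (e-marked) cable on the unused pin


def detect_orientation(cc1: Termination, cc2: Termination) -> Optional[str]:
    """Return which CC pin carries the connection, or None if no ordinary device is found.

    Simplified: with a normal device attached, exactly one pin sees Rd and the
    other sees either open or Ra, depending on the cable.
    """
    if cc1 is Termination.RD and cc2 is not Termination.RD:
        return "CC1"  # plug inserted one way up
    if cc2 is Termination.RD and cc1 is not Termination.RD:
        return "CC2"  # plug inserted the other way up
    # Both open (nothing attached), both Rd or both Ra (special accessory modes),
    # or Ra with open: no ordinary device detected in this model.
    return None


print(detect_orientation(Termination.RD, Termination.OPEN))    # CC1
print(detect_orientation(Termination.RA, Termination.RD))      # CC2
print(detect_orientation(Termination.OPEN, Termination.OPEN))  # None
```

Because the port, not the user, figures out the orientation, the plug can be symmetrical and either insertion works.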

Here’s what a USB Type-C connector looks like. Left: Type-A to Type-C cable; right: Type-C to Type-C cable (Image credit: Belkin)

From the looks of it, the Type-C connector could become the only connector you’ll ever need on a device. Paired with USB 3.1, it offers enough bandwidth for transferring 4K content and other large files, as well as enough power delivery to charge most 15-inch notebooks. It’s also backwards compatible with previous USB versions, although you may need a Type-A-to-Type-C cable, which is becoming more common anyway.

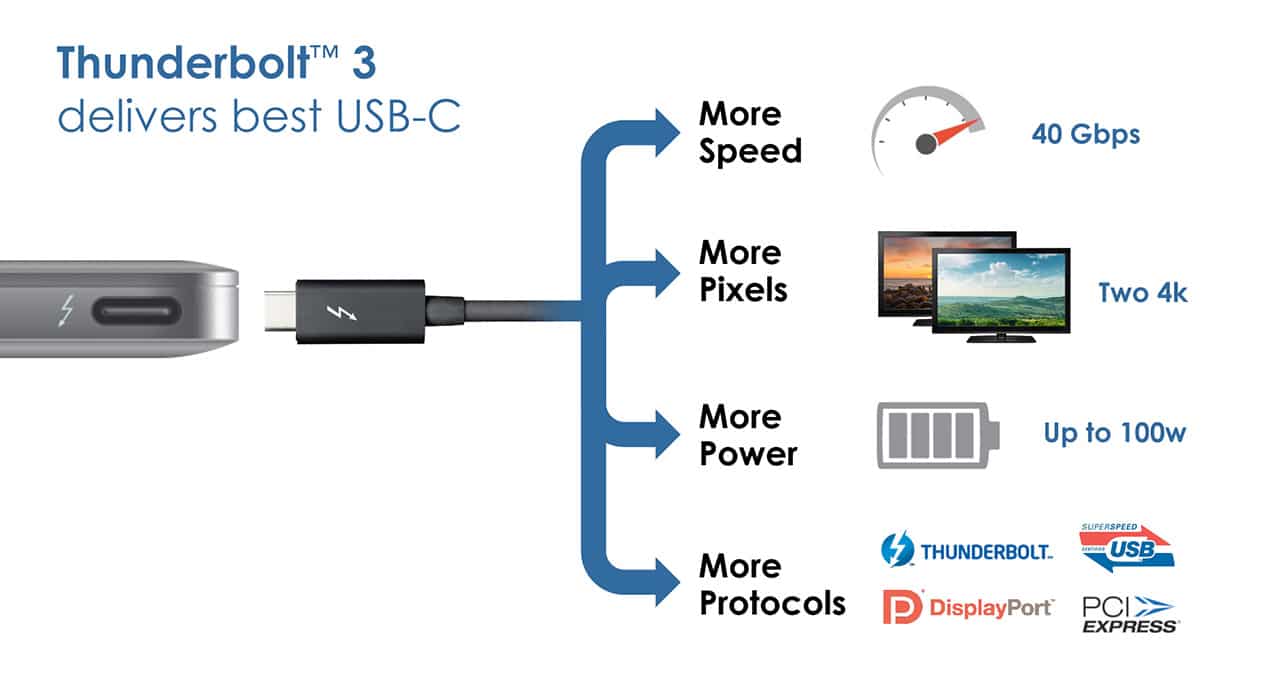

Another big thing about USB Type-C is that it can carry other protocols through what are called Alternate Modes. This lets Type-C ports output video via DisplayPort or HDMI, though you’ll need a compatible adapter or cable to do so. Intel’s Thunderbolt 3 technology is also listed as an Alternate Mode partner for USB Type-C. If you aren’t familiar with Thunderbolt, it’s a high-speed input/output (I/O) protocol that carries both data and video over a single cable. Many newer laptops have it built in.

A USB Type-C Thunderbolt 3 port (with compatible dock/adapter) does everything you’ll ever need when it comes to I/O ports (Image credit: Intel)

Rapid adoption of the Type-C port has already begun, as seen on notebooks such as Chromebooks, Windows convertibles, and the latest Apple MacBook Pro line. Smartphones using the Type-C connector are also increasing in number.

Summing things up, the introduction of USB Type-C is a huge step forward for I/O connectivity, as it can support almost everything a consumer would want for their gadgets: high-bandwidth data transfer, video output, and charging.

SEE ALSO: SSD and HDD: What’s the difference?


