Features

Apple’s Portrait Lighting function allows for pro studio effects

Apple wastes no time in showing off what its hardware can do with its newest smartphone features. One specific function caught my eye, and for good reason. The newly introduced Portrait Lighting mode promises to let casual smartphone users like yours truly capture photographs with studio lighting effects on the new iPhones.

Portrait Lighting is available on two of Apple’s newest iPhones, the 8 Plus and the X; the dual-camera technology coupled with the new chips allows for this new capability.

How does it work, exactly? According to the announcement, the iPhone cameras sense the scene and create a depth map that separates the subject from the background. Machine learning then creates facial landmarks and changes the lighting on the contours of your face. Apple stresses that the effect isn’t a filter; it’s the result of real-time analysis as the photo is being taken.
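For the curious, here is a toy sketch of the depth-map idea in Python. It is not Apple's pipeline, which has not been published in this form; the function names (`subject_mask`, `stage_light`), the 1.5-meter cutoff, and the brightness gain are all made-up assumptions for illustration. It only shows how a per-pixel depth value could be used to tell subject from background and then light the two differently.

```python
# Toy illustration only: NOT Apple's implementation.
# All names, thresholds, and pixel values here are hypothetical.

def subject_mask(depth_map, max_subject_depth):
    """Mark pixels no farther than max_subject_depth (meters) as subject."""
    return [[d <= max_subject_depth for d in row] for row in depth_map]

def stage_light(image, mask, gain=1.2):
    """Brighten subject pixels (capped at 255) and black out the background."""
    out = []
    for img_row, mask_row in zip(image, mask):
        out.append([
            min(255, round(p * gain)) if is_subject else 0
            for p, is_subject in zip(img_row, mask_row)
        ])
    return out

# A tiny 2x3 grayscale "photo" with a matching depth map in meters.
depth = [[0.8, 0.9, 3.0],
         [0.7, 0.8, 3.2]]
image = [[100, 120, 200],
         [110, 250, 180]]

mask = subject_mask(depth, max_subject_depth=1.5)  # assumed cutoff
lit = stage_light(image, mask)
print(lit)
```

In this sketch the right-hand column sits about three meters away, so it is treated as background and goes black, while the nearer pixels get a brightness boost. A real system would presumably do far more, such as using facial landmarks to shape the light along the contours of the face.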

What does that all mean? Simply that iPhone users are about to get portraits with really great lighting — if we can afford the new iPhones.

Portrait Lighting mode choices include natural light, stage light, contour light, and a monochrome light setting.

SEE ALSO: Apple’s Animojis are the future of emojis


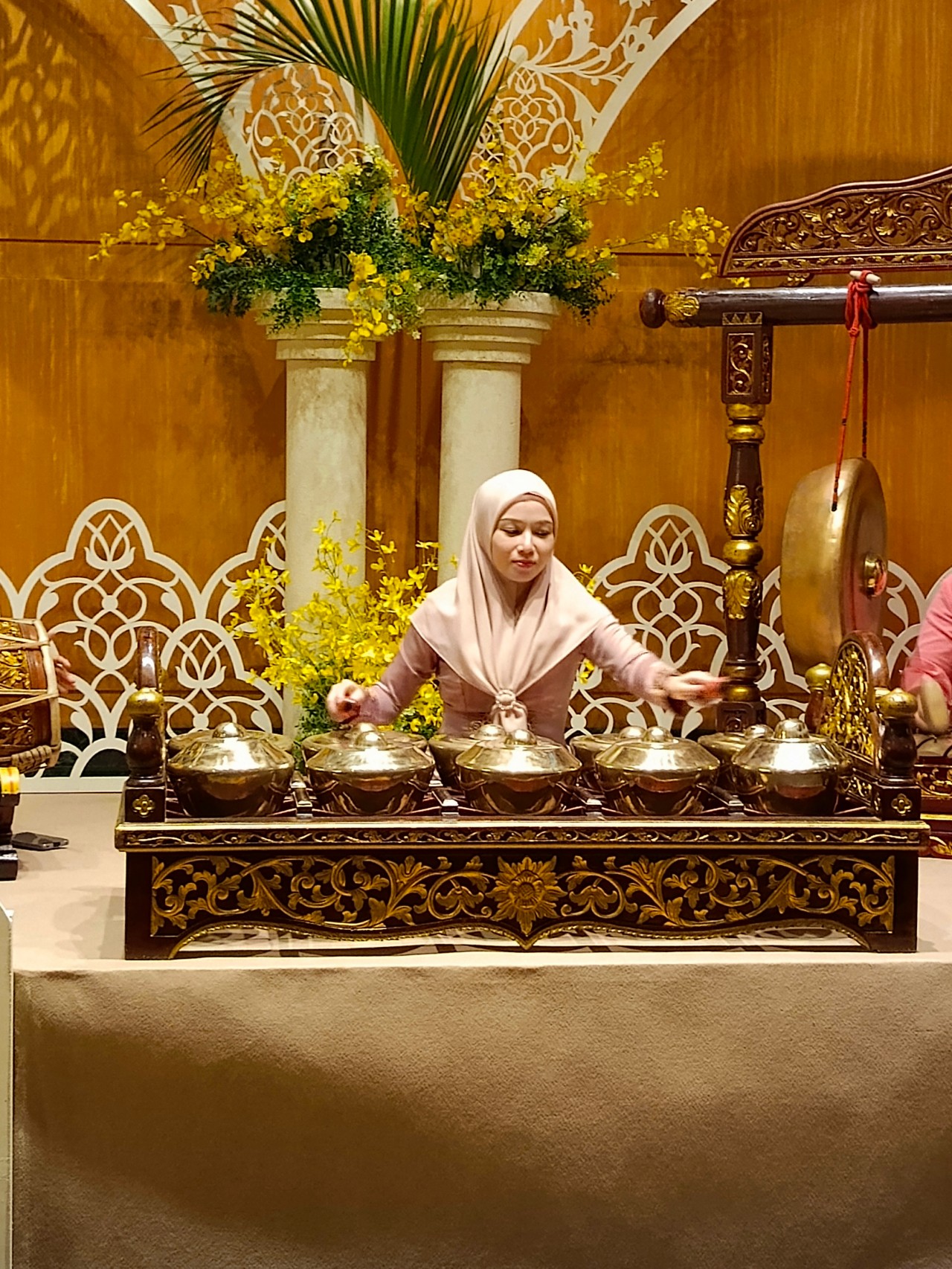

The Infinix Note 40 Pro+ 5G prides itself on its charging technologies. But what about its camera capabilities? Well, here’s a quick round-up of the many photos we took around the time the Note 40 series was launched in Kuala Lumpur, Malaysia.

The NOTE 40 Series features a 108MP main shooter with 3x Lossless Superzoom. It also has OIS for steadier shots when taking videos.

The only edits applied to the photos here are some resizing and cropping to make the page easier to load. Take a look at all these sample shots.

Infinix Note 40 Pro series launch day

Kwai Chai Hong/ ‘Little Ghost Lane’

Petaling Street (Chinatown)

In and around Central Market

Bank Negara Malaysia Museum and Art Gallery

Istana Negara entrance

Merdeka Square

Malaysian Bak Kut Teh and more

Petronas Twin Towers at night

Steady shooter

The Infinix Note 40 Pro+ 5G isn’t a stellar shooter. But at its price point, it’s pretty darn decent for capturing different scenarios. Take these photos into some editing software and you can certainly elevate their look.

The NOTE 40 Pro+ 5G is priced at PhP 13,999. It may be purchased through Infinix’s Lazada, Shopee, and TikTok Shop platforms, where customers can get up to PhP 2,000 off. Additionally, the first 100 buyers can get an S1 smartwatch or XE23 earphones. Alternatively, customers may opt for the Shopee-exclusive NOTE 40 Pro (4G variant) for PhP 10,999.

Get your game on with the Lenovo LOQ 2024. This capable laptop is your entry point to PC Gaming and a lot more.

It comes at an absolutely affordable price point: PhP 48,995.

You get capable hardware under the hood to support gaming and more. The Lenovo LOQ 15IAX9I runs on a 12th Gen Intel Core i5 processor and Intel Arc Graphics.

Those are key to bringing unreal graphics to this segment. With support for the latest tech like DirectX 12 Ultimate, the Lenovo LOQ lets players enjoy high frame rates.

Creating content? It comes with AI Advantage to help boost performance. Engines and accelerators speed up media processing workloads, especially for creatives. It also works with Intel’s XeSS (Xe Super Sampling), which uses machine learning to produce images that are as close to reality as possible.

The laptop supports a configuration of up to 32GB of RAM and 1TB of SSD Storage.

As for its display, the device has a large 15.6-inch, Full HD panel that is more than enough for gaming, video editing, content consumption, and whatever else you do on a laptop. This display has a 144Hz refresh rate, 300 nits of brightness, and an anti-glare finish.

Videos come out clear, crisp, and realistic. Audio is punchy and as loud as it gets. Windows Sonic elevates it more when you use headphones. And it just takes a few minutes to render HD videos on editing software.

As it runs on Windows 11, if you are going to use it for work, you can take advantage of various features. The Lenovo Vantage Widget is there for constant reminders, Copilot will help you organize your tasks, and Microsoft Edge is there for casual browsing.

There is an assortment of ports at the back for easy connectivity. And as this is meant for gaming, we put it to the test. Racing games that look better at high frame rates? Check. Shooting titles that require heavy work? Not a problem. You can play all your favorites and not worry about performance.

Best of all, it takes less than an hour to juice up this laptop all the way to 100%.

So, whether you’re looking to get started with PC Gaming, or an upgrade for work and entertainment needs, the Lenovo LOQ has you covered.

This feature is a collaboration between GadgetMatch and Lenovo Philippines.

With all the options available in the market, shopping for TVs can get overwhelming.

One brand Michael Josh recommends whenever someone asks? It’s none other than Samsung.

They have TVs for every price point and every feature a user might prioritize.

But which one is right for you?

Keep watching our 2024 Buyer’s Guide to find out the latest Samsung TV that best matches your needs.
